AI ISP Chips and their role in advanced visual perception in smart vision systems

AI ISP chips combine artificial intelligence with advanced image signal processor (ISP) technology. They help smart vision systems make better images. Demand for these chips is rising. The market was worth US$ 354 million in 2024 and may grow to US$ 1,190 million by 2031. Recent improvements show that AI-driven image processing can find and recognize motion with over 99% accuracy, which helps real-time uses. These changes meet the need for better image perception in robotics, consumer electronics, and medical devices.

Key Takeaways

- AI ISP chips use artificial intelligence and image processing together. They help devices see pictures faster and more clearly.
- These chips make images look better by lowering noise and making colors brighter. They also help devices find objects and scenes right away.
- AI ISP chips are used in phones, cars, security cameras, and robots. This helps these devices make smarter and safer choices.
- Developers have problems like high prices, using too much power, and privacy laws. But they keep making chips smaller, quicker, and better at saving energy.
- The AI ISP chip market is growing fast all over the world. Soon, everyday devices will have even better visual technology.

AI ISP Chips Overview

What Are AI ISP Chips

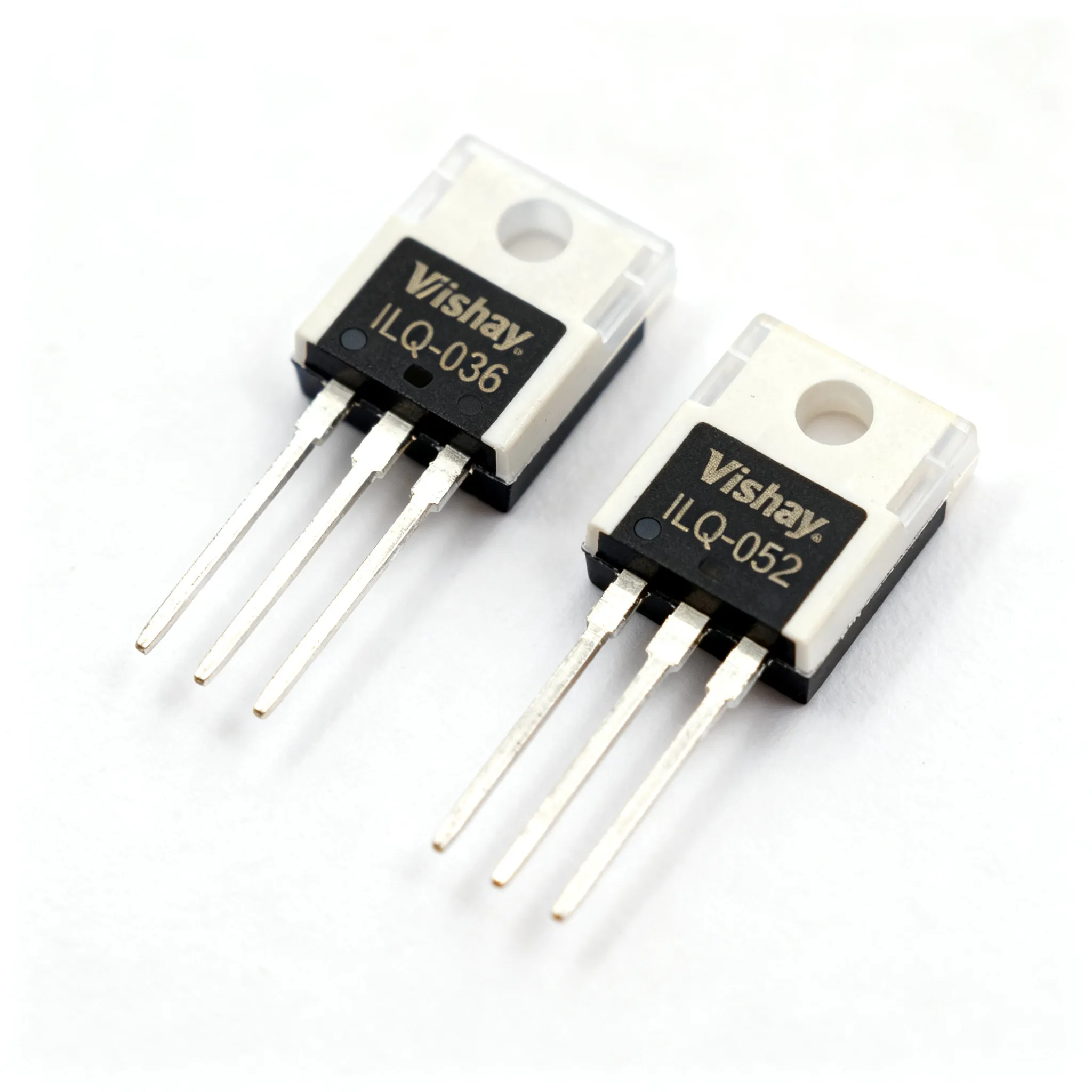

AI ISP chips mix artificial intelligence with image signal processor technology. These chips help smart vision systems work fast and well. An AI ISP chip uses a processor for both image and AI jobs at once. This helps make images better, find objects, and study scenes right away.

A typical AI ISP chip has several parts. It has a neural processing unit (NPU), an image signal processor, and sometimes a vision processor. The neural processing unit runs deep learning models. The image signal processor does things like remove noise and fix colors. The vision processor helps with object finding and scene understanding.

Many new devices use AI ISP chips. For example, the Hailo-15 AI Vision Processor can run many deep learning models at the same time. It works with 4K video, high dynamic range, and noise reduction. The chip also uses computer vision engines to make low-light images better and keep images steady. VeriSilicon’s AI-ISP technology uses a special link to join the ISP and neural processing unit. This setup lets the chip process images fast and use less power, without the main CPU. Sony’s Intelligent Vision Sensor puts AI right inside the sensor. This lets the chip handle images and run AI models very fast, which helps keep data private and cuts down on sending data.

The table below lists some AI ISP chips and how they work in smart vision systems:

| System / Example | CNN Model(s) | FPS | Power Consumption (mW) | Accuracy / Notes | Key Features / Remarks |
|---|---|---|---|---|---|
| Near-Sensor AI Vision System | Mobilenet_V1, MobileNet_V2, Inception_v1 | 30 / 120 | 278.7 / 379.1 | TOP1 accuracy 70% (Mobilenet_v1) | 3D integration tech reduces latency and power; flexible CNN model support; memory constrained (9 MB) with 8-bit quantization |
| Firefly Teledyne | Mobilenet V1 1.0 224, Inception v1 | 12 / 4 | Low power (exact mW not specified) | - | 1 TOPS NPU; lightweight system balancing performance and resource constraints |
| JeVois | Optimized MobileNet v1 0.5 (12 of 18 layers) | 7.6 | N/A | Model modified for integration | Constrained system pushing CNN embeddability limits |
| Facial Recognition System | Custom network (4 conv + 1 FC layer) | 1 | 0.62 | - | Ultra-low power operation for facial recognition |
| DroNet CNN Implementation | Resnet8 variant (quantized) | 6 | 64 | - | Optimized for performance |
| SCAMP-5 In-Sensor AI Vision | Configurable CNN | 210 / 2260 | ~2000 | - | Very high FPS; in-sensor convolution and fast inference; size 35 mm × 25 mm |

These examples show that AI vision hardware can reach high frame rates or run on very little power, depending on the design. They work with many deep learning models and fit in devices with small memory.
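Rows with different frame rates are easier to compare when power and frame rate are combined into energy per frame (power divided by FPS). The short sketch below does this arithmetic for the rows of the table that list both numbers. It assumes that, where two values are given, the first power figure belongs to the first frame rate; the table does not state this, so treat the pairing as a guess.

```python
# Energy per frame in millijoules: power (mW) divided by frame rate (frames/s).
# Assumption: in rows with two values ("30 / 120" and "278.7 / 379.1"),
# the first FPS pairs with the first power figure. The table does not say so.

def energy_per_frame_mj(power_mw: float, fps: float) -> float:
    """Return the energy spent on one frame, in millijoules."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return power_mw / fps  # mW / (frames/s) = mJ per frame

# (system, FPS, power in mW) taken from the table above
rows = [
    ("Near-Sensor AI Vision System (30 FPS)", 30.0, 278.7),
    ("Near-Sensor AI Vision System (120 FPS)", 120.0, 379.1),
    ("Facial Recognition System", 1.0, 0.62),
    ("DroNet CNN Implementation", 6.0, 64.0),
]

for name, fps, power_mw in rows:
    print(f"{name}: {energy_per_frame_mj(power_mw, fps):.2f} mJ per frame")
```

Under that pairing assumption, the near-sensor system spends about 9.3 mJ per frame at 30 FPS and about 3.2 mJ per frame at 120 FPS, so the faster mode would be cheaper per frame even though it draws more power overall.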

Note: The 'Independent ISP Chip Market' report says AI ISP chips make images better, speed up work, and help image analysis be more correct. These chips are now used in many areas, like electronics, cars, security, and medical imaging.

Evolution of ISPs

The story of the image signal processor started with NASA’s moon trips. Early ISPs helped with images from CCD sensors. Later, when people switched from CCD to CMOS sensors, ISPs became even more important in cameras and phones. Over time, ISPs got smarter and faster.

The next big change came with artificial intelligence. Vision processors started working with ISPs. This teamwork made new things possible, like face recognition and scene study. Now, AI ISP chips do more than just make images look good. They can understand what is in a picture and make choices right away.

Today, AI ISP chips are very important in smart vision systems. They help devices see and understand the world, almost like people do. The growth of vision AI SoC technology shows how much ISPs have changed. These chips now put image processing, AI, and vision processor jobs together. This helps new uses in safety, automation, and fun.

Architecture and Integration

Key Components

AI ISP chips have a modular design. This helps them do many jobs in smart vision systems. Each chip has a few main parts. The image signal processor takes raw data from the camera sensor. It makes the image look better, removes noise, and fixes colors. The neural network processing unit runs AI models. These models help the system find objects and scenes. Some chips also have a vision processor. This part does extra jobs like tracking movement or understanding hard images.

A typical AI ISP chip links these parts so they work together. For example, VeriSilicon’s chip joins an ISP with a neural network unit. This chip uses RISC-V or Arm-based cores. It works with common interfaces like MIPI for image input and output. It also connects with UART, I2C, and SDIO. This flexible design lets the chip fit in many devices, from smartphones to cars.

Performance is important for these chips. Designers focus on a few things:

- High computing power for AI jobs
- Fast memory access for image data
- Low energy use to save battery
- Flexibility to change or upgrade parts

Special chips like Google’s TPU or IBM’s TrueNorth show why these things matter. They give strong computing power and save energy. But sometimes they cost more or are less flexible. Hybrid designs try to balance these needs.

Note: Teams check AI models often to keep them fair and correct. They use good data and watch for bias. They also make sure the models work well over time.

Sensor and Software Integration

Smart vision systems need strong links between sensors and software. The camera sensor takes pictures. The ISP makes the images better and sends them to the AI models. The software uses this data to make choices or control other devices.

In real life, like in stores or factories, sensors work with software to track items or check products. For example, a smart vision system in a stadium can use a camera sensor and RFID tags. The system matches faces and items. It updates sales and inventory right away. This helps stores work faster and more correctly.

Some systems use many cameras and sensors at once. PC-based vision systems can do harder jobs because they have more power. They can connect many cameras, control robots, and run advanced software all together. Smart cameras are simpler. They are good for easy jobs but may need help to talk to other machines.

Keyence Vision Systems show how to connect sensors and software step by step:

- The camera sensor takes a picture.
- The ISP makes the image better.
- The system checks for problems or measures parts.
- The software decides what to do and tells other devices.

This process helps factories check every product fast and with high accuracy. The system can also talk to robots. This makes the whole line work better.
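The four steps above can be sketched as a small pipeline. This is only an illustration, not Keyence software: the function names, the fake sensor data, and the threshold and tolerance values are all made up for the example.

```python
from typing import List

Image = List[List[int]]  # grayscale image as rows of 0-255 pixel values

def capture() -> Image:
    """Step 1 (hypothetical): stand-in for a camera sensor read-out."""
    return [
        [10, 12, 11, 10, 9],
        [11, 200, 210, 205, 10],
        [10, 198, 207, 202, 12],
        [9, 11, 10, 12, 10],
    ]

def isp_clean(image: Image, black_level: int = 8) -> Image:
    """Step 2 (hypothetical): a tiny ISP stage that subtracts the black level."""
    return [[max(0, p - black_level) for p in row] for row in image]

def measure_part_width(image: Image, threshold: int = 100) -> int:
    """Step 3: measure the part as the widest run of bright pixels in any row."""
    widest = 0
    for row in image:
        run = 0
        for p in row:
            run = run + 1 if p > threshold else 0
            widest = max(widest, run)
    return widest

def decide(width_px: int, expected_px: int = 3, tolerance_px: int = 0) -> str:
    """Step 4: tell downstream devices whether to pass or reject the part."""
    return "PASS" if abs(width_px - expected_px) <= tolerance_px else "REJECT"

def inspect() -> str:
    """Run the four steps in order and return the decision."""
    raw = capture()
    clean = isp_clean(raw)
    width = measure_part_width(clean)
    return decide(width)

print(inspect())
```

Keeping each step as its own function mirrors the split between sensor, ISP, analysis, and control software, so one stage can be swapped without touching the others.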

AI and ISP Synergy

Image Enhancement

AI and ISP work together to make images look better. The ISP gets the raw image from the sensor and cleans it up. It takes away noise, fixes colors, and makes details sharper. AI models look at the image and use smart steps to help even more. These models can make dark spots brighter and fix blurry parts. They can also help with glare or shadows.
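As a rough illustration of two classic ISP-style steps, the sketch below applies a 3x3 mean filter to reduce noise and a gamma curve to brighten dark areas. These are simple hand-written stand-ins chosen for the example; real ISPs and the AI models described here use far more advanced methods, and the function names are hypothetical.

```python
from typing import List

Image = List[List[int]]  # grayscale, values 0-255

def denoise_mean3x3(image: Image) -> Image:
    """Replace each pixel with the mean of its 3x3 neighborhood.

    Pixels on the border average over the neighbors that exist.
    """
    h, w = len(image), len(image[0])
    out = []
    for y in range(h):
        row = []
        for x in range(w):
            vals = [
                image[ny][nx]
                for ny in range(max(0, y - 1), min(h, y + 2))
                for nx in range(max(0, x - 1), min(w, x + 2))
            ]
            row.append(round(sum(vals) / len(vals)))
        out.append(row)
    return out

def brighten_gamma(image: Image, gamma: float = 0.5) -> Image:
    """Apply out = 255 * (in / 255) ** gamma; gamma < 1 lifts dark pixels the most."""
    return [[round(255 * (p / 255) ** gamma) for p in row] for row in image]

noisy = [
    [20, 22, 21],
    [19, 90, 20],  # the 90 is an isolated noisy pixel
    [21, 20, 22],
]
smooth = denoise_mean3x3(noisy)
bright = brighten_gamma(smooth)
print("center after denoise:", smooth[1][1])
print("center after gamma:", bright[1][1])
```

The mean filter pulls the outlier toward its neighbors, and the gamma step then raises the whole dark patch while leaving pure black and pure white unchanged.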

Many vision AI systems use deep learning models like MobileNet v2, ResNet 50, and Vision Transformer. These models run on different hardware and use tricks like pruning and quantization. Engineers check how well these models work by looking at speed, accuracy, and slowdowns when models get smaller. For example:

- A Performance Benchmark Harness (PBH) tests AI models on CPUs and GPUs.
- Throughput tells how many images the system can handle each second.
- Some models, like ResNet 50, stay accurate even when pruned by 25%.
- Using a GPU can double the number of images processed compared to a CPU.
- The PBH helps engineers balance speed, accuracy, and hardware limits.

Note: These benchmarks help developers pick the best AI models for making images better in smart vision systems. They can keep images clear while using less power and memory.
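The core of a throughput measurement can be very small. The sketch below is not the PBH itself; it is a minimal, hypothetical harness that times any callable over a batch of inputs and reports images per second. The `toy_model` function is a placeholder for a real network.

```python
import time
from typing import Callable, List, Sequence

def measure_throughput(model: Callable[[Sequence[float]], float],
                       images: List[Sequence[float]],
                       repeats: int = 5) -> float:
    """Return images processed per second, using the fastest of several runs."""
    if not images:
        raise ValueError("need at least one image")
    best = float("inf")
    for _ in range(repeats):
        start = time.perf_counter()
        for img in images:
            model(img)
        elapsed = time.perf_counter() - start
        best = min(best, elapsed)
    # Guard against a zero reading on very coarse timers.
    return len(images) / max(best, 1e-9)

def toy_model(image: Sequence[float]) -> float:
    """Placeholder 'model': a weighted sum standing in for real inference."""
    return sum(0.5 * p for p in image)

# 200 fake images of 1024 pixels each
batch = [[float(i % 256) for i in range(1024)] for _ in range(200)]
print(f"{measure_throughput(toy_model, batch):.0f} images/s")
```

Taking the fastest of several runs reduces the effect of background activity on the machine; a fuller harness would also record accuracy so speed and quality can be compared side by side.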

Real-Time Processing

Real-time processing is very important for smart vision systems. The ISP and AI must work fast together to handle every image right away. This speed matters for things like self-driving cars, augmented reality, and robots. The system must process images quickly to make choices without waiting.

The table below shows some key benchmarks for real-time processing in vision systems:

| Metric | Measured Value / Benchmark | Context / Application |
|---|---|---|
| 5G Network Latency (Central Europe) | 7 to 12 milliseconds | Real-world tests connecting to Exoscale Cloud; exceeds AI application latency requirements by ~270% |
| 5G Claimed Latency | 1 to 4 milliseconds | Theoretical 5G latency claims vs. real-world performance |
| 6G Target Latency | As low as 100 microseconds | Enables real-time AI workloads such as robotics, remote surgery, autonomous vehicles |
| 6G Target Data Rate | Up to 1 terabit per second | Supports high-speed data transfer for AI training and decision-making |
| Latency Requirements for AR | Below 20 milliseconds | To prevent motion sickness in augmented reality applications |
| Video Frame Interval | 16.6 milliseconds (60 FPS) | Minimum frame rate requirement for smooth video |
| IoT Protocol Latency Overhead | 5 to 8 milliseconds | Additional delay from protocols like MQTT, AMQP, CoAP impacting total latency |
| Autonomous Vehicle Data Generation | Up to 4 terabytes per day | High bandwidth demand for sensor data, HD mapping, real-time analysis |

Smart vision systems need to meet these strict timing rules. The ISP gets the image ready, and the AI checks it in just milliseconds. This teamwork lets the system react to changes almost right away. For example, a car can see a person and stop in time. Augmented reality devices can update pictures fast, so there is no lag.
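The numbers in the table can be added into a simple end-to-end latency budget. The sketch below uses the measured 5G range (7 to 12 ms) and the IoT protocol overhead (5 to 8 ms) from the table. The 4 ms figure for on-device ISP plus AI processing is a made-up placeholder, since the table gives no such value.

```python
# Latency budget check using figures from the table above.
# Assumption: PROCESSING_MS (on-device ISP + AI time) is a placeholder value,
# not a measurement from this article.

AR_LIMIT_MS = 20.0                 # AR needs total latency below 20 ms
FRAME_INTERVAL_MS = 1000.0 / 60.0  # about 16.7 ms between frames at 60 FPS
PROCESSING_MS = 4.0                # hypothetical ISP + AI processing time

def total_latency_ms(network_ms: float, protocol_ms: float,
                     processing_ms: float = PROCESSING_MS) -> float:
    """Add up the stages of one round trip."""
    return network_ms + protocol_ms + processing_ms

def within_budget(latency_ms: float, limit_ms: float) -> bool:
    """True when the latency is strictly below the limit."""
    return latency_ms < limit_ms

best_case = total_latency_ms(network_ms=7.0, protocol_ms=5.0)    # low end of measured 5G
worst_case = total_latency_ms(network_ms=12.0, protocol_ms=8.0)  # high end of measured 5G

for label, latency in (("best case", best_case), ("worst case", worst_case)):
    print(f"{label}: {latency:.1f} ms, "
          f"AR ok: {within_budget(latency, AR_LIMIT_MS)}, "
          f"fits one 60 FPS frame: {within_budget(latency, FRAME_INTERVAL_MS)}")
```

With these assumed numbers, the best case (16 ms) stays under the 20 ms AR limit but the worst case (24 ms) does not. This is one reason systems with strict timing keep the ISP and AI work on the device instead of sending frames over the network.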

Scene Understanding

Scene understanding is a big part of new vision systems. The ISP and AI work together to make the image better and also understand what is happening. AI-based scene recognition uses deep learning to find objects, people, and actions in real time. The ISP gives a clear image, and the AI uses computer vision to study it.

Smart algorithms can spot faces, read license plates, or count things on a shelf. The system can follow movement and see patterns. This helps in security, stores, and factories. For example, a security camera can see strange actions and warn workers. In a factory, the system can check if products are made right.
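Following movement can be shown with a much simpler method than deep learning. The sketch below uses plain frame differencing: it counts pixels whose brightness changed by more than a threshold between two frames. It is a stand-in to illustrate the idea, not the method used by any product named in this article, and the threshold values are arbitrary.

```python
from typing import List

Frame = List[List[int]]  # grayscale frame, values 0-255

def changed_pixels(prev: Frame, curr: Frame, pixel_threshold: int = 25) -> int:
    """Count pixels whose brightness changed by more than pixel_threshold."""
    if len(prev) != len(curr) or any(len(a) != len(b) for a, b in zip(prev, curr)):
        raise ValueError("frames must have the same size")
    return sum(
        1
        for row_prev, row_curr in zip(prev, curr)
        for a, b in zip(row_prev, row_curr)
        if abs(a - b) > pixel_threshold
    )

def motion_detected(prev: Frame, curr: Frame,
                    pixel_threshold: int = 25, min_changed: int = 2) -> bool:
    """Report motion when enough pixels changed; small flicker is ignored."""
    return changed_pixels(prev, curr, pixel_threshold) >= min_changed

frame_a = [
    [10, 10, 10, 10],
    [10, 10, 10, 10],
    [10, 10, 10, 10],
]
frame_b = [
    [10, 10, 10, 10],
    [10, 200, 200, 10],  # a bright object entered the scene
    [10, 10, 12, 10],    # tiny change, below the threshold
]
print(changed_pixels(frame_a, frame_b), motion_detected(frame_a, frame_b))
```

A clean, low-noise image from the ISP matters here: noisy frames would push many pixels over the threshold and cause false alarms.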

Vision AI systems use these skills to make choices without people. The teamwork between ISP and AI lets the system see and understand the world, making smart vision possible.

Applications of AI ISP Chips

Smartphones and Consumer Devices

Smartphones use ISP chips to make cameras work better. These chips help take clear pictures in different lighting, so photos with both bright and dark areas still look good. The ISP works with the camera sensor to cut noise and sharpen images. Many phones now have features like real-time object detection and face detection. These tools help focus on people or things fast. Phones with more than one camera use ISP chips to switch between lenses. Users can pick wide, ultra-wide, or zoom lenses for photos. This gives more ways to take pictures. People want better cameras in high-end phones, so ISP technology keeps growing.

Automotive and IoT

Cars use ISP chips in driver assistance systems. Cars must look at images from many cameras and sensors. The ISP helps the car see road signs, lanes, and other cars. Fast image processing helps with safety tools like automatic braking and lane keeping. Companies like MediaTek make ISP chips for car displays and infotainment systems. These chips let cars use many screens and cameras. This makes driving safer and more fun. In IoT, smart home and city devices use ISP chips to watch places. The chips help cameras see in the dark and add smart security and control.

Market reports say ISP chips matter in cars, security, and gadgets. Asia-Pacific uses them most because the region makes lots of cars and electronics.

Security and Robotics

Security cameras use ISP chips for good images day and night. The chips let cameras spot motion and faces. Security systems need ISP chips for clear video all the time. Robots also use cameras and ISP chips to move around and see things. Factories use ISP chips to check products and guide machines. The ISP makes sure each camera image is sharp and ready for computer use. These uses show ISP chips help smart vision in many areas.

Challenges and Future Trends

Technical Hurdles

Developers face many problems when making new ISP chips for smart vision systems.

- Designing and making ISP chips costs a lot of money. This makes it hard for companies to sell cheap devices.
- It takes a long time to finish new ISP chip designs.
- Using too much power is still a big issue. Mobile and small devices need ISP chips that save energy.
- Rules about security and privacy keep changing. ISP chips must follow these rules to keep user data safe.
- Many companies want to make the best ISP chips. This makes the market very competitive.
- Sometimes, there are not enough parts to build chips. This can slow down making new ISP chips.
- Adding smart algorithms to ISP chips while still saving energy is hard.

Developers need to balance speed, price, and safety for today’s vision systems.

Recent Innovations

In the last few years, ISP chip technology has improved a lot. MediaTek now uses 2nm chips. These chips use up to 45% less power than older 5nm chips. More transistors fit in each chip, so ISP chips work faster and better. MediaTek and NVIDIA work together to make special chips for big cloud jobs.

Other new developments are:

- Putting AI accelerators in ISP chips for fast scene detection and better pictures.
- More people want good cameras in phones and media devices, so the market is growing.
- Companies spend more money on ISP chips that save energy and mix software with hardware.
- There is a push for ISP chips that use less power to meet global energy rules.
- Asia-Pacific is growing fast, with China, India, and Southeast Asia wanting more ISP chips.

Future Outlook

The future for ISP chips in new devices looks good. Experts think the ISP chip market could grow from $4.4 billion in 2024 to $11 billion by 2034. Consumer electronics will stay on top, with over 35% of the market in 2024.
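The yearly growth rate implied by a forecast like this can be checked with the compound annual growth rate formula, CAGR = (end / start)^(1 / years) - 1. The sketch below applies it to the two forecasts quoted in this article. The function name is just for illustration, and the result is plain arithmetic on the quoted figures, not a number taken from any market report.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.10 means 10% per year)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1.0 / years) - 1.0

# ISP chip market: $4.4 billion (2024) to $11 billion (2034)
isp_market = cagr(4.4, 11.0, 2034 - 2024)

# AI ISP chip market: US$ 354 million (2024) to US$ 1190 million (2031)
ai_isp_market = cagr(354.0, 1190.0, 2031 - 2024)

print(f"ISP chip market: {isp_market:.1%} per year")
print(f"AI ISP chip market: {ai_isp_market:.1%} per year")
```

These work out to roughly 9.6% per year for the wider ISP chip market and roughly 18.9% per year for the AI ISP chip market.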

ISP chips will help with real-time tasks like face detection and object tracking in cars and security. New technology, such as CMOS and gallium nitride, will make ISP chips work better and use less energy. Faster connections from 5G and Wi-Fi 6 will need ISP chips that can handle lots of data.

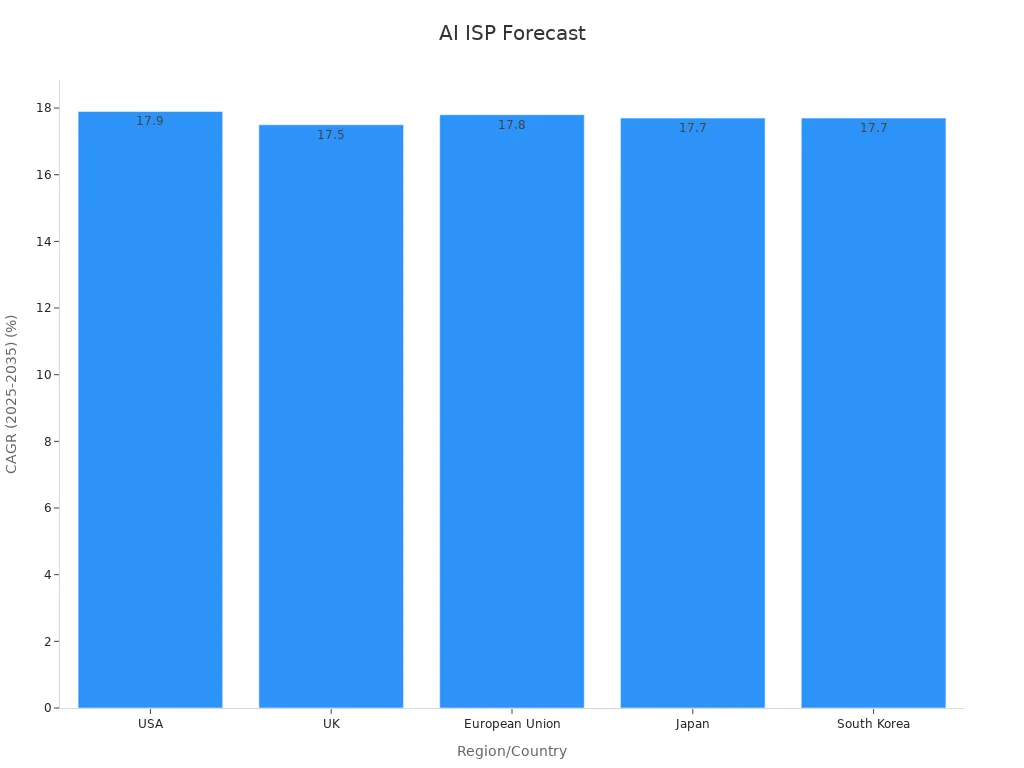

Places like the USA, UK, EU, Japan, and South Korea will see strong growth, with CAGRs close to 18%. Governments and private groups will help with research and making new ISP chips.

| Region/Country | CAGR (2025-2035) | Key Trends Supporting AI ISP Chip Integration |
|---|---|---|
| USA | | Money goes to 3nm chips, AI cores, 5G, AR, and on-device ML for better images and processing |
| UK | 17.5% | Growth comes from AI phones, 5G, edge computing, and local chip research |
| European Union | 17.8% | Focus on making their own chips, AI, and custom SoCs for games and media |
| Japan | 17.7% | Needs fast mobile computing, mixed SoC designs, and government help for local chip making |
| South Korea | 17.7% | Leads in 3nm chips, AI SoCs, and mixing 5G modems and NPUs for top devices |

AI ISP chips change how smart vision systems work. These chips help devices see and understand the world almost like people.

- Devices process images faster and use less power.
- Smart cameras, cars, and robots now make better choices.

AI ISP chips will keep growing and improving. People will see them in more everyday technology soon. The future of visual computing looks bright with these chips.

FAQ

What makes AI ISP chips different from regular ISPs?

AI ISP chips use artificial intelligence to work with images. They can find objects, make pictures look better, and help devices decide things fast. Regular ISPs only fix colors and take away noise. AI ISP chips let devices see and understand more about the world.

Where do people use AI ISP chips most often?

People use AI ISP chips in phones, security cameras, cars, and robots. These chips help devices take clear photos, find faces, and follow moving things. Many smart home gadgets use them for better video and picture checking.

How do AI ISP chips help with low-light images?

AI ISP chips use smart steps to make dark spots brighter and cut noise. They help show more details in low-light places. This lets cameras take better pictures at night or inside. Users get sharper and brighter photos.

Will AI ISP chips replace human vision in the future?

AI ISP chips help machines see and understand pictures fast. They do not take the place of human eyes. People still need to watch and guide these systems. In the future, AI ISP chips will work with humans to make things safer and easier.