Edge Inference TCO Analysis: Ascend 910 vs. Leading Rivals

The edge AI hardware market is growing rapidly. Projections show it could reach USD 58.90 billion by 2030. This growth drives competition. Several strong Ascend 310/910 alternatives exist for developers. Each one offers unique benefits for specific tasks.

Key Ascend 310/910 alternatives include:

- NVIDIA Jetson Series: It leads in raw performance for complex AI models.

- Google Coral Edge TPU: This platform excels in TCO for high-volume, low-power jobs.

- Qualcomm AI Engine: It is a top choice for excellent performance-per-watt.

Key Takeaways

- Different edge AI hardware fits different needs. NVIDIA Jetson offers high performance. Google Coral is best for low-cost, low-power tasks. Qualcomm AI Engine balances power and performance for mobile devices. Ascend 310/910 provides a good mix of performance and cost savings.

- Total Cost of Ownership (TCO) includes hardware cost, power use, and maintenance. It helps you choose the best long-term solution, not just the fastest one.

- Google Coral has the lowest TCO for simple, high-volume tasks. NVIDIA Jetson has the highest TCO but offers top performance. Ascend 310/910 balances performance and TCO well.

- For many large-scale workloads, edge computing saves money compared to cloud solutions. Processing data locally reduces data transfer costs and speeds up response times.

BENCHMARKING ASCEND 310/910 ALTERNATIVES

Choosing the right edge hardware requires a deep dive into performance data. Simple spec sheets do not tell the whole story. This section benchmarks key Ascend 310/910 alternatives. We will analyze their performance across different AI models and workloads. The goal is to provide clear, data-driven insights for your specific project needs.

TEST METHODOLOGY AND METRICS

A fair comparison needs a standardized testing environment. Our analysis uses industry-accepted models, frameworks, and metrics. This approach ensures the results are both reliable and relevant.

AI Models Tested 🧪

We selected models that represent common edge AI tasks. These range from image classification to object detection and language processing.

- Image Classification: ResNet-50

- Object Detection: YOLOv5, YOLOv8 (Nano, Small), and SSD MobileNet V1

- Language Models: DeepSeek (for high-end edge devices)

Benchmarks for models like DeepSeek measure query latency and throughput. These metrics are vital for comparing performance in demanding workloads.
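Query latency and throughput can be measured with a simple timing loop. The sketch below is a minimal, framework-agnostic harness, not the benchmark code used for this analysis; `fake_inference` is a hypothetical stand-in for a real model call (for example, running an ONNX Runtime session), and the warm-up and run counts are assumed defaults.

```python
import statistics
import time
from typing import Callable


def benchmark(infer: Callable[[], object], warmup: int = 5, runs: int = 50) -> dict:
    """Time repeated single-query calls and summarize latency and throughput."""
    # Warm-up calls are excluded so one-time costs (lazy init, caches) don't skew results.
    for _ in range(warmup):
        infer()

    latencies_ms = []
    start = time.perf_counter()
    for _ in range(runs):
        t0 = time.perf_counter()
        infer()
        latencies_ms.append((time.perf_counter() - t0) * 1000.0)
    elapsed_s = time.perf_counter() - start

    # quantiles(n=100) returns the 1st..99th percentile cut points (99 values).
    pct = statistics.quantiles(latencies_ms, n=100)
    return {
        "p50_ms": pct[49],
        "p95_ms": pct[94],
        "throughput_qps": runs / elapsed_s,
    }


def fake_inference() -> None:
    # Hypothetical placeholder for a real model call; sleeps about 2 ms.
    time.sleep(0.002)


if __name__ == "__main__":
    print(benchmark(fake_inference))
```

Reporting p95 alongside p50 matters at the edge, because occasional slow queries hurt real-time responsiveness even when the median looks good. Throughput here is measured for sequential single queries; batched or concurrent serving would need a different loop.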

Software and Precision ⚙️

The software framework and data precision directly impact performance. We used common tools to get practical results.

- Frameworks: Our tests utilized ONNX Runtime and TensorFlow Lite. These frameworks are popular for their cross-platform compatibility and optimization features.

- Precision: We focused on INT8 and FP16 precision. These settings offer a balance between speed and accuracy. Frameworks like TensorFlow Lite and ONNX Runtime provide robust support for this type of quantization.

Note: INT8 (8-bit integer) quantization significantly speeds up inference and reduces model size. It is ideal for resource-constrained edge devices. FP16 (16-bit floating-point) offers better precision than INT8 with less computational cost than full FP32.
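To make the size and accuracy trade-off concrete, here is a minimal sketch of symmetric per-tensor INT8 quantization in plain Python. It assumes a single scale per tensor and the [-127, 127] code range; production toolchains such as TensorFlow Lite and ONNX Runtime use their own calibration schemes (per-channel scales, zero points), so treat this as an illustration of the idea rather than either framework's algorithm.

```python
from typing import List, Tuple


def quantize_int8(values: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor INT8 quantization: q = round(x / scale), clamped to [-127, 127]."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(v / scale))) for v in values]
    return q, scale


def dequantize_int8(q: List[int], scale: float) -> List[float]:
    """Map INT8 codes back to approximate real values."""
    return [v * scale for v in q]


weights = [0.42, -1.27, 0.003, 0.88, -0.5]
q, scale = quantize_int8(weights)
restored = dequantize_int8(q, scale)

# Rounding error per element is at most half a quantization step (scale / 2).
max_err = max(abs(a - b) for a, b in zip(weights, restored))
print(q, round(scale, 5), max_err <= scale / 2)

# Storage: 4 bytes per FP32 value, 2 per FP16, 1 per INT8.
n_params = 25_000_000  # hypothetical model size, roughly ResNet-50 scale
print(n_params * 4 / 1e6, n_params * 2 / 1e6, n_params * 1 / 1e6)  # MB: FP32, FP16, INT8
```

Each value's rounding error stays within half a quantization step, and storage drops to one byte per parameter: 100 MB in FP32, 50 MB in FP16, and 25 MB in INT8 for the hypothetical 25-million-parameter model.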

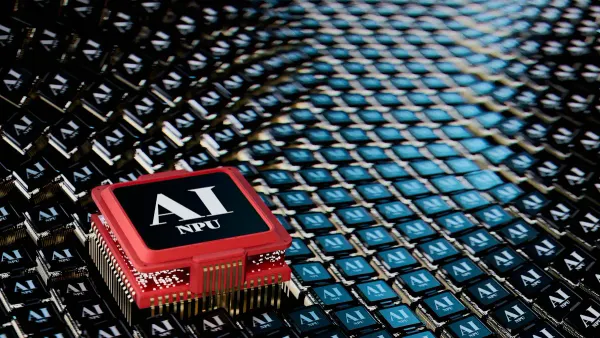

VS. NVIDIA JETSON SERIES

NVIDIA's Jetson series is a top performer for complex AI. The Jetson AGX Orin, for example, excels at running large vision models and even some language models at the edge. When raw performance is the primary goal, Jetson is a powerful choice among Ascend 310/910 alternatives.

However, this performance comes at a cost: high-end Jetson modules consume more power. The same trade-off appears when comparing Huawei's data-center Ascend part with NVIDIA's data-center GPUs. The NVIDIA H100 represents the performance ceiling, but its power needs are substantial. The Ascend 910C offers lower raw performance within a much lower power envelope.

| Specification | Huawei Ascend 910C | NVIDIA H100 (SXM5) |
|---|---|---|
| Power Consumption (TDP) | ~310W | Up to 700W |

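This difference in power draw is critical. A lower TDP means each Ascend 910C accelerator draws less power, which is a key advantage for reducing operational costs in large-scale deployments. Whether it also delivers better performance per watt depends on the throughput each chip sustains on the target workload, so TDP should be read alongside measured benchmark results.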
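TDP translates directly into an electricity bill, but efficiency also depends on how much work each watt buys. The sketch below assumes, as an upper bound, that an accelerator draws its full TDP around the clock, and uses a hypothetical price of $0.12/kWh; `perf_per_watt` needs measured throughput, which this section does not provide.

```python
HOURS_PER_YEAR = 24 * 365


def annual_energy_cost(tdp_watts: float, price_per_kwh: float, utilization: float = 1.0) -> float:
    """Upper-bound yearly electricity cost for one accelerator running continuously.

    Assumes average draw = TDP * utilization; real draw varies with workload.
    """
    kwh = tdp_watts * utilization * HOURS_PER_YEAR / 1000.0
    return kwh * price_per_kwh


def perf_per_watt(throughput: float, watts: float) -> float:
    """Efficiency metric, e.g. inferences/s per watt. Requires measured throughput."""
    return throughput / watts


PRICE = 0.12  # hypothetical electricity price in USD/kWh
for name, tdp in [("Ascend 910C", 310), ("H100 SXM5", 700)]:
    print(name, round(annual_energy_cost(tdp, PRICE), 2))
```

At that assumed price the TDP figures work out to about $326 versus about $736 per accelerator-year. Efficiency is a separate question: a chip drawing roughly 44% of the power but also delivering roughly 44% of the throughput has the same performance per watt.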

VS. GOOGLE CORAL EDGE TPU

Google's Coral Edge TPU carves out a different niche: it is the efficiency champion for specific tasks. The Coral TPU accelerates TensorFlow Lite models that are fully 8-bit quantized and compiled for the Edge TPU. It delivers excellent performance on models like MobileNet at a fraction of the power used by its competitors.

For high-volume deployments where power is limited and tasks are well-defined, the Coral is an excellent choice. It may not handle the complex models that a Jetson or high-end Ascend can. However, for classic edge AI tasks like simple object detection or keyword spotting, its low power consumption and low cost make it a leading TCO contender. This makes it one of the most cost-effective Ascend 310/910 alternatives for scaled-out projects.

VS. QUALCOMM AI ENGINE

The Qualcomm AI Engine focuses on performance-per-watt. It is integrated into Snapdragon chipsets, making it a leader in mobile and power-sensitive devices. This platform shines in scenarios where balancing performance with battery life is essential. The tight integration of its CPU, GPU, and dedicated AI hardware allows it to run models very efficiently.

Qualcomm's advantage is clear in many mobile-first applications.

- On-Device LLMs: It can run language models for composing emails or real-time translation without relying on the cloud.

- Camera Features: The AI Engine powers real-time video enhancements, background blur, and advanced scene detection.

- Gaming: It enables adaptive frame rates and real-time performance tuning for immersive gaming experiences.

For developers building applications for battery-powered devices, the Qualcomm AI Engine is one of the strongest Ascend 310/910 alternatives available. Its architecture provides a distinct advantage in power-constrained environments.
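The link between performance per watt and battery life can be sketched with simple energy arithmetic: energy per inference is average power times latency, and a battery's capacity in watt-hours converts to joules at 3,600 J per Wh. The battery size, power draw, and latency below are hypothetical values, not measurements of any Snapdragon device.

```python
def energy_per_inference_j(avg_power_w: float, latency_s: float) -> float:
    """Energy for one inference in joules: power (W, i.e. J/s) times latency (s)."""
    return avg_power_w * latency_s


def inferences_per_charge(battery_wh: float, avg_power_w: float, latency_s: float) -> int:
    """Back-to-back inferences one full charge can power, ignoring every other load."""
    battery_j = battery_wh * 3600.0  # 1 Wh = 3600 J
    return int(battery_j / energy_per_inference_j(avg_power_w, latency_s))


# Hypothetical inputs for illustration only: a 15 Wh battery,
# 2 W average accelerator draw, and 20 ms per inference.
print(inferences_per_charge(15.0, 2.0, 0.020))
```

Halving either the power draw or the latency roughly doubles the number of inferences per charge, which is why efficiency rather than peak speed drives battery-powered designs.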

TOTAL COST OF OWNERSHIP (TCO)

Performance benchmarks tell only part of the story. A true evaluation must consider the Total Cost of Ownership (TCO). TCO includes every cost across a device's entire lifecycle. It moves the analysis from "which chip is fastest?" to "which solution provides the most value?" This financial perspective is critical for scaling any edge AI project from a prototype to a full deployment.

TCO COMPONENTS

Understanding TCO begins with breaking it down into its core parts. Three main categories define the total cost of an edge solution.

- Initial Hardware Acquisition Cost (CAPEX) 💰: This is the upfront price of the hardware. It includes the edge devices, servers, and any necessary mounting or enclosures. The value of this hardware also depends on its expected operational life. Some devices require a refresh every few years, while others are built to last longer. A device's warranty can also impact long-term replacement costs.

- Power & Cooling Costs (OPEX) ⚡: Edge devices consume electricity 24/7. This operational expense adds up quickly across a large deployment. Power costs for an edge AI project can make up 10-25% of the total TCO. This category also includes costs for cooling systems in dense deployments and any data backhaul fees for sending information to a central server or cloud.

- Development & Maintenance Costs (OPEX) 🛠️: This component covers the human effort required. It includes the initial software development, model optimization, and ongoing maintenance. The maturity of a platform's software ecosystem and tools directly affects these costs. It also includes the expense of employees who manage the network and hardware.
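The power and cooling component can be estimated from average device power, hours of operation, and electricity price. This is a minimal sketch under stated assumptions: devices run 24/7 at a constant average draw, and cooling is modeled as a hypothetical fractional uplift (`cooling_overhead`) rather than a separate system. Data backhaul fees are left out.

```python
def fleet_power_opex(
    devices: int,
    avg_watts: float,
    price_per_kwh: float,
    years: float,
    cooling_overhead: float = 0.0,
) -> float:
    """Estimate power + cooling cost for a fleet of always-on edge devices.

    cooling_overhead is a hypothetical fractional uplift (0.3 = +30%) for
    cooling in dense deployments; use 0.0 for passively cooled devices.
    """
    hours = years * 365 * 24
    kwh = devices * avg_watts * hours / 1000.0
    return kwh * price_per_kwh * (1.0 + cooling_overhead)


# Hypothetical inputs: 100 devices at 15 W average, $0.12/kWh, 3 years, +30% cooling.
cost = fleet_power_opex(100, 15.0, 0.12, 3, cooling_overhead=0.3)
print(round(cost, 2))
```

With these hypothetical inputs, 100 devices consume 39,420 kWh over three years, or about $6,150 including the assumed 30% cooling uplift.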

COMPARATIVE TCO MODEL

To make these concepts concrete, we can model a hypothetical scenario. This model projects the 3-year TCO for a deployment of 100 edge devices. The analysis assumes an average electricity cost and standard development efforts. The costs are estimates designed to show the relative differences between platforms.

| Cost Component | Ascend 310/910 | NVIDIA Jetson | Google Coral | Qualcomm AI Engine |
|---|---|---|---|---|
| Hardware (CAPEX) | $120,000 | $150,000 | $6,000 | $75,000 |
| Power & Cooling (OPEX) | $18,000 | $27,000 | $1,500 | $4,500 |
| Dev & Maintenance (OPEX) | $40,000 | $30,000 | $25,000 | $35,000 |
| Total 3-Year TCO | $178,000 | $207,000 | $32,500 | $114,500 |
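The model is simple enough to reproduce in a few lines, which makes it easy to swap in your own quotes and electricity prices. The sketch below copies the component costs from the table above and derives two figures the table does not show: cost per device-year and power's share of the total.

```python
from typing import Dict

# 3-year cost components (USD) for 100 devices, copied from the TCO table.
TCO_MODEL: Dict[str, Dict[str, int]] = {
    "Ascend 310/910": {"capex": 120_000, "power": 18_000, "dev": 40_000},
    "NVIDIA Jetson": {"capex": 150_000, "power": 27_000, "dev": 30_000},
    "Google Coral": {"capex": 6_000, "power": 1_500, "dev": 25_000},
    "Qualcomm AI Engine": {"capex": 75_000, "power": 4_500, "dev": 35_000},
}
DEVICES = 100
YEARS = 3


def total_tco(parts: Dict[str, int]) -> int:
    """TCO = CAPEX + all OPEX components over the modeled period."""
    return sum(parts.values())


# Print platforms from lowest to highest total TCO.
for name, parts in sorted(TCO_MODEL.items(), key=lambda kv: total_tco(kv[1])):
    total = total_tco(parts)
    per_device_year = total / DEVICES / YEARS
    power_share = parts["power"] / total
    print(f"{name:20s} total=${total:>8,}  per device-year=${per_device_year:,.2f}  "
          f"power share={power_share:.1%}")
```

Power and cooling come to about 10% of TCO for Ascend and 13% for Jetson, while the low-power Coral (about 4.6%) and Qualcomm (about 3.9%) configurations fall below the 10-25% range cited earlier; for those platforms, development and hardware costs dominate.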

Analysis of the TCO Model

The table reveals clear winners for specific conditions.

- Google Coral is the undisputed TCO champion for specialized tasks. Its extremely low hardware and power costs make it ideal for high-volume deployments of simple, quantized models. For projects where cost and power are the primary constraints, no other platform comes close.

- The Qualcomm AI Engine offers the best TCO for mobile or power-sensitive applications. Its balance of low power draw and strong hardware integration keeps operational costs down. It provides a significant TCO advantage in battery-powered devices where efficiency is paramount.

- The NVIDIA Jetson series has the highest TCO in this model. The high hardware cost and significant power consumption reflect its focus on maximum performance. This cost is justified in scenarios where raw processing power for complex models is the top priority and budget is a secondary concern.

- The Ascend 310/910 finds a compelling middle ground. It offers performance that rivals high-end competitors with greater power efficiency. In this model, its lower hardware and power costs more than offset its higher development and maintenance costs, giving it a lower TCO than the Jetson series. That makes it a strong choice for large-scale deployments that need high performance without Jetson-level hardware and power costs.

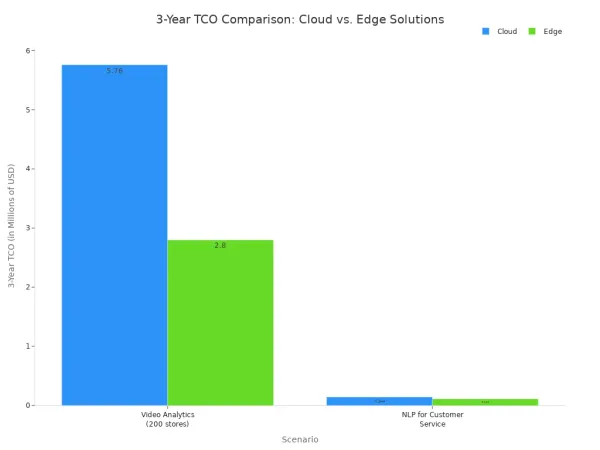

This edge hardware analysis is part of a larger trend. For many large-scale workloads, edge computing provides a clear TCO advantage over cloud-based solutions. Processing data locally avoids massive data transfer fees. A single high-definition video stream can generate terabytes of data monthly, and sending it all to the cloud is expensive. Edge devices process data on-site and send only small results, drastically cutting bandwidth costs.
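The bandwidth argument is easy to check with back-of-the-envelope arithmetic. The sketch assumes a hypothetical 8 Mbps bitrate for a continuous 1080p stream, a 30-day month, decimal units (1 TB = 10^12 bytes), and a hypothetical $0.05/GB transfer price; real bitrates and cloud pricing vary widely.

```python
SECONDS_PER_MONTH = 30 * 24 * 3600  # 30-day month


def monthly_terabytes(bitrate_mbps: float) -> float:
    """Data volume of one continuous stream per 30-day month, in TB (10^12 bytes)."""
    bits = bitrate_mbps * 1e6 * SECONDS_PER_MONTH
    return bits / 8 / 1e12


def monthly_transfer_cost(streams: int, bitrate_mbps: float, usd_per_gb: float) -> float:
    """Cost of shipping every stream's raw data to the cloud each month."""
    gigabytes = streams * monthly_terabytes(bitrate_mbps) * 1000
    return gigabytes * usd_per_gb


# Hypothetical inputs: an 8 Mbps 1080p stream and a $0.05/GB transfer price.
print(round(monthly_terabytes(8), 3))                  # TB per stream per month
print(round(monthly_transfer_cost(100, 8, 0.05), 2))   # USD per month for 100 cameras
```

Under those assumptions, one stream produces about 2.6 TB per month, and shipping 100 such streams would cost about $12,960 per month in transfer fees alone, before any cloud compute.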

The accompanying chart shows how an edge solution's TCO can be significantly lower than a cloud solution's TCO for the same task over a three-year period.

Choosing the right edge hardware is the key to maximizing these savings. Each platform offers a different balance of performance, power, and cost, directly impacting the final TCO.

The best choice depends on the project's needs: the application type, power limits, and cost-performance goals. GPU-based platforms such as Jetson deliver low-latency processing for real-time AI tasks, and the data shows clear winners for other jobs.

Quick Recommendations 🏆

- NVIDIA Jetson: Choose for maximum performance on complex models.

- Google Coral: Select for the lowest TCO in high-volume, low-power tasks.

- Qualcomm AI Engine: Use for the best performance-per-watt in mobile devices.

- Ascend 310/910: Pick for a strong balance of high performance and lower TCO.

FAQ

What is Total Cost of Ownership (TCO)?

Total Cost of Ownership (TCO) measures the complete cost of a product. It includes three main parts:

- 💰 The initial purchase price (CAPEX).

- ⚡ Ongoing power and cooling costs (OPEX).

- 🛠️ Development and maintenance expenses (OPEX).

Why is INT8 precision important for edge AI?

INT8 precision makes AI models smaller and faster. This is very useful for edge devices with limited memory and power. It allows complex models to run efficiently without needing powerful, expensive hardware. This process helps lower the overall TCO.

Which hardware is best for complex AI models?

The NVIDIA Jetson series often provides the highest raw performance. It excels at running large, complex models for tasks like advanced video analytics or on-device language processing. This power makes it a top choice when performance is the main goal.

How does edge computing save money over the cloud?

Edge computing processes data locally. This action reduces the need to send large amounts of data to the cloud. It saves significant money on data transfer fees. Local processing also delivers faster response times for real-time applications.